Sector-Driven Evaluation Strategy

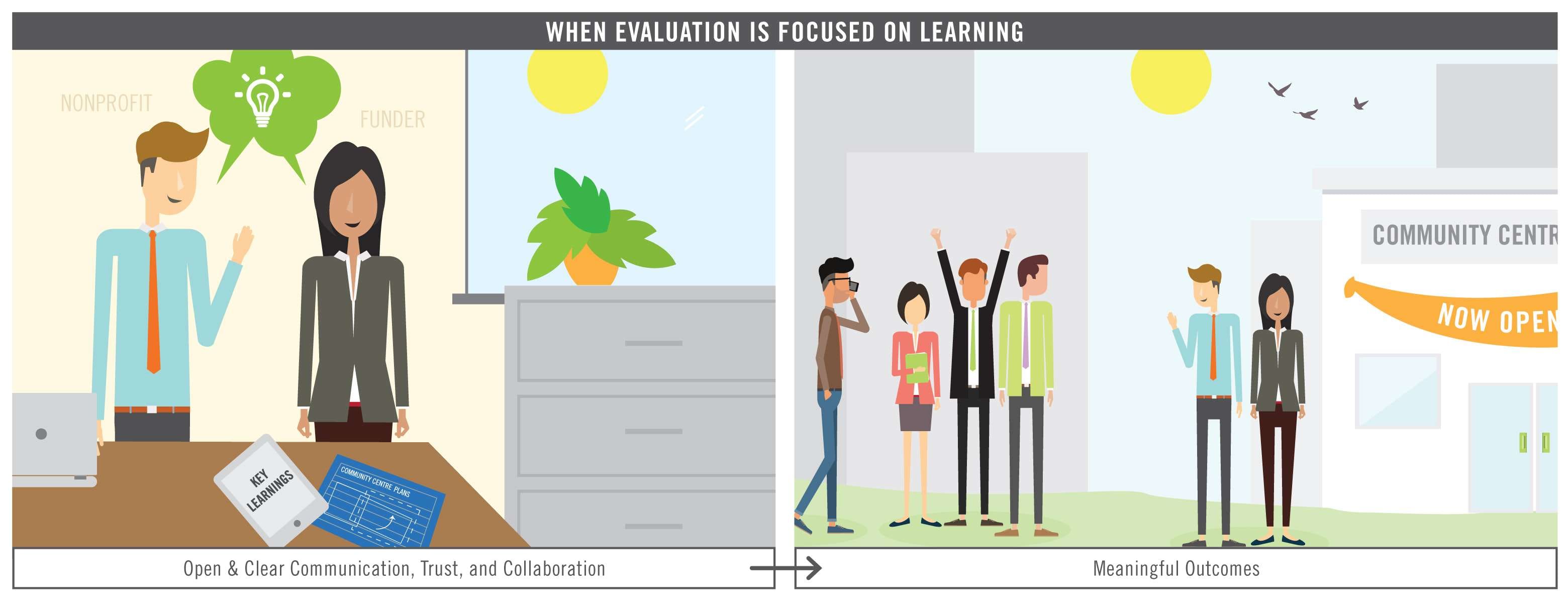

The nonprofit sector needs an evaluation system that addresses questions that really matter. Fundamentally, the sector needs a system that makes it easier, more rewarding, and less stressful for nonprofits and their partners to do meaningful evaluation work.

While a lot of great evaluation work takes place in Ontario's nonprofit sector, the conversation has tended to focus on the "how-to", emphasizing workshops and toolkits as the way to improve. While those approaches are important, we must also ask why, and return to the original reasons for and purposes of evaluation.

ONN Podcasts

Episode 1 (April 4, 2016)

Interviewee: Chanel Grenaway, Canadian Women’s Foundation

Music: Podington Bear

Description: In our first evaluation podcast, Andrew and Ben sit down with Chanel Grenaway from the Canadian Women's Foundation (CWF). Tune in to hear how evaluation is making a difference for the CWF and its grantees.

Episode 2 (January 31, 2017)

Interviewee: Marie Zimmerman, Hillside Festival

Music: Podington Bear

Description: In our second evaluation podcast, Andrew and Ben sit down with Marie Zimmerman from Hillside Festival to chat about evaluation and how it benefits the festival and its patrons.

Episode 3 (May 25, 2017)

Interviewee: Jade Huguenin, Ontario Federation of Indigenous Friendship Centres (OFIFC)

Music: Podington Bear

Description: In our third evaluation podcast, Andrew and Ben chat with Jade Huguenin from the OFIFC about their work, the context of evaluation in Indigenous communities, and the importance of a community-driven approach.

The Propellus Podcast

“Transform Your Story” – a podcast by Propellus, the Volunteer Centre of Calgary.

Andrew Taylor explains how to make evaluation more useful, including by working with funders and creating a culture of evaluation.

Webinars and Presentations

Webinars

- Rethinking Evaluation: Developing a Strategy for the Sector, By the Sector (2016.01.27)

In this webinar, we want to hear from you! We have a few ideas for how to start changing the way evaluation works in the nonprofit sector, and we want your feedback on what a strategy might include: for example, a vision and set of principles for evaluation, a negotiation guide to use with funders and other stakeholders, ways to promote an evaluation culture, and a network approach to sharing and collaborating more effectively. More specifically, we want to know what you think needs to change at a systems level and how we can change it together.

2016.01.27 Rethinking Evaluation Webinar Slides

- Evaluations that Work: What the Nonprofit Sector Can Learn from ONN and Vibrant Communities (2016.06.22)

Evaluations "work" when they lead to insight and action. We all know that the process can be resource-intensive, so it is important to maximize the chances of getting it right! In this webinar, two leading learning institutes, the Ontario Nonprofit Network (ONN) and Tamarack's Vibrant Communities Canada, will unpack real-life stories from Cities Reducing Poverty members to identify cases where evaluation worked really well. Together, we will identify how those members achieved exceptional success and draw out the top takeaways for the nonprofit sector.

2016.06.22 Webinar Recording & Resources

- Five Important Discussion Questions to Make Evaluation Useful (2016.12.07)

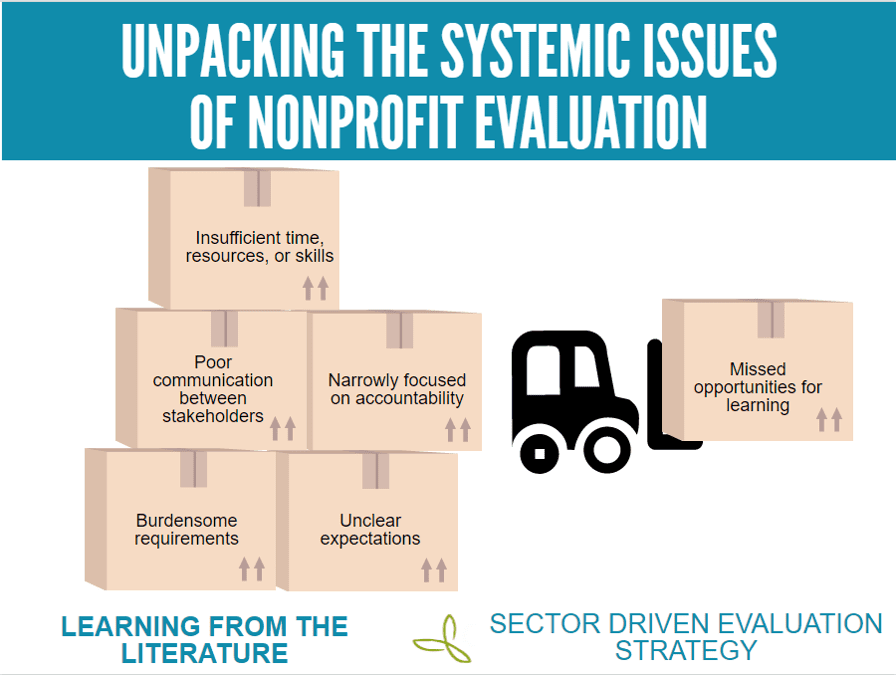

Efforts to build evaluation capacity in the nonprofit sector often begin with the assumption that the problem is a lack of skill, resources, or interest on the part of nonprofits. However, based on our research, we think the problem may have more to do with the fact that the nonprofit evaluation "system" is not well designed, i.e., the ways evaluation is funded, rewarded, disseminated, and used at a societal level. Join this interactive webinar to explore our brand-new guide to help you get it right. We'll present some common evaluation scenarios and walk through how you can put this guide into action to get the most out of an evaluation. We'll push your critical thinking about the purposes that evaluation work is serving and the reasons why it sometimes fails to deliver on its promise.

2016.12.07 Five Important Discussion Questions Slides

Presentations

Adapted Ignite Presentation from the AEA Conference (2016.10.28)

Blog Posts

- Building a Better Nonprofit Evaluation Ecosystem — 7 Recommendations for Cultivating Evaluations that Work – published on AEA365.org (August 2017)

- Can We Talk? Promoting better evaluation conversations between funders and nonprofits – published on AEA365.org (December 2016)

- Evaluation Agenda for the Nonprofit Sector – published on AEA365.org (February 2016)