Evaluation: Expanding design learning

Late last fall, ONN’s evaluation project took me to the American Evaluation Association’s annual conference in Atlanta, Georgia, to present alongside Andrew Taylor, ONN’s Resident Evaluation Expert.

For me, it was an important professional development opportunity (one of the seven elements of decent work) and, thinking back, it was a bit of a surreal experience. Never did I think I would be in Atlanta at an evaluation conference of 3,500 people from around the world, talking evaluation all day, for four days straight. On the first night, I found myself having a traditional southern American dinner with 20 or so fellow Canadians from across the country. In between bites of fried chicken, sips of beer, and talk about evaluation, it occurred to me how much my professional career had changed in only a couple of years. Back then, I had no idea there was even a discipline of evaluation!

Andrew and I were there to present our work related to our recently released guide, Learning Together: Five Important Discussion Questions to Make Evaluation Useful. It was also an opportunity for me to learn more about the diverse field of evaluation.

Evaluation learnings to share with the sector

The Americans don’t do anything small. There were over 900 breakout sessions to choose from, and they ran the gamut of the evaluation field. Three sessions stuck with me, and I want to share them with the network.

First, one of the highlights was a session called Learning from the Original Designers of Evaluation that featured well-known experts in the evaluation field. The talk was part of a larger discussion on the big-picture role of evaluation in addressing society’s challenges. In particular, Dr. Stafford Hood emphasized that evaluation can have real-world consequences and is therefore critically important to do, and to do right. He referenced the Gregory Porter song “1960 What?” and observed that its powerful lyrics could easily, but sadly, be made into a song called “2016 What?”. He stressed that we shouldn’t be afraid to confront uncomfortable learnings. In Hood’s own words, “evaluation and research can serve as a conduit for social change or serve institutional racism.” In sum, Hood argued for evaluation to be a crucial piece in pursuing social justice.

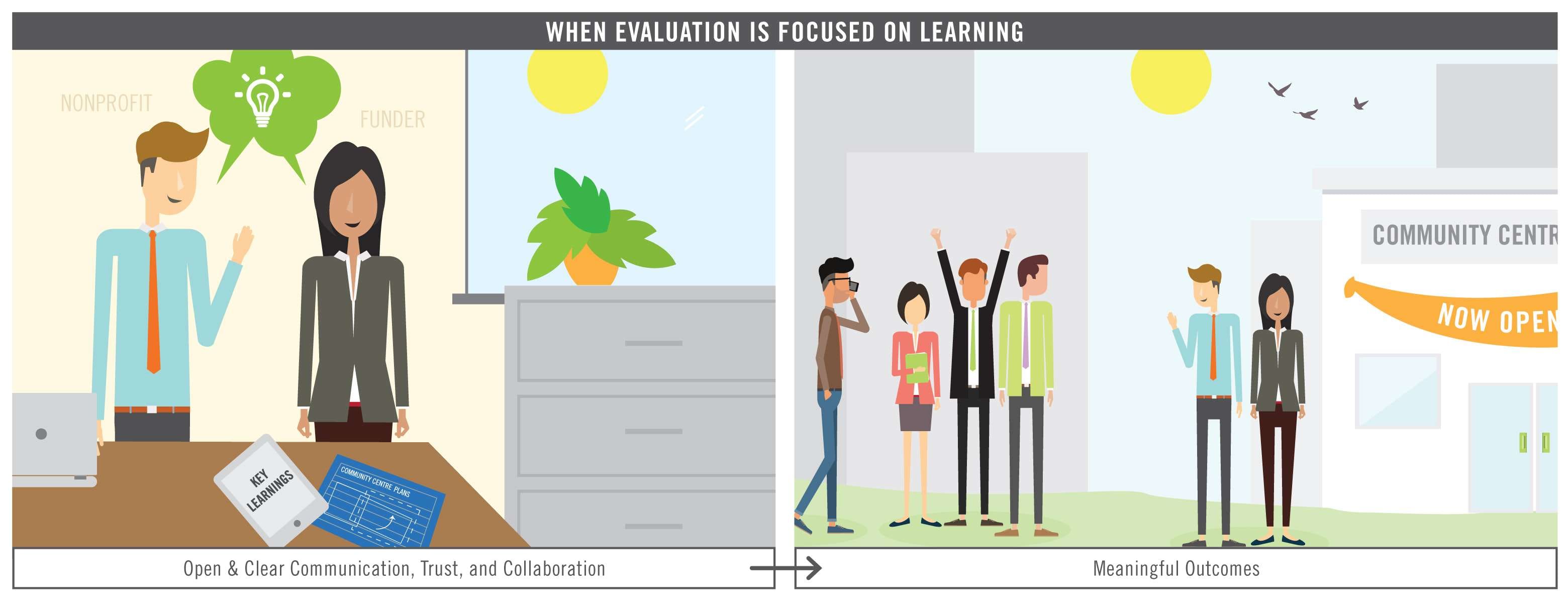

His talk, as well as those of his co-presenters, was a strong reminder that evaluation isn’t only about collecting and reporting data to funders. Rather, it is about helping to answer important questions, make improvements or changes, and ultimately lead to action, whatever that action may be.

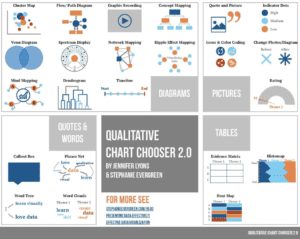

The session on Design and Visual Display of Qualitative Data and Information was another highlight. A number of presenters showed how qualitative data could be presented better, often using tools no fancier than PowerPoint or Excel. In particular, Jennifer Lyons introduced a draft of her Qualitative Chart Chooser, a very practical and easy-to-use tool that helps emphasize the messages that really matter.

There were a number of sessions on data visualization at the conference and, as far as I could tell, they were some of the most popular. There is a hunger to be able to tell the story of data better than the traditional long-form report, and data visualization appears to be a growing skillset that will be in demand in the future.

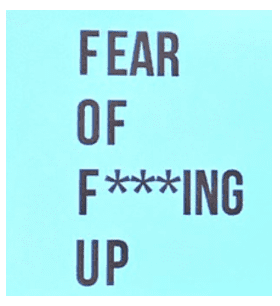

Finally, the session Learning from Evaluation Design Failures named a real fear in evaluation that we have heard about in our work at ONN. The session featured stories from three panelists about when and how they failed, the implications of that failure, and how they learned from it. Stephanie Evergreen in particular left an impression after humorously introducing the concept of FOFU, or Fear of F***ing Up. Evergreen encouraged us to “fail big, often, and in front of other people,” while other session speakers emphasized neither walking away from failure nor dwelling on it for too long. The key is to critically reflect with an eye to improvement and then push on.

What made the session work was the honesty of their admissions. The failures were real and sometimes serious, and the implications weren’t sugarcoated. However, from those failures came important insights. It was an important reminder that failing happens and that it is something to be embraced, not dismissed.

Spurring on more evaluation – and professional – development

Atlanta was a great learning experience and something I likely never would have thought to do on my own. It was an important professional development opportunity. I’m grateful to my colleague Andrew for taking the lead in putting together our submission to present at the conference, and grateful to have been able to attend as ONN’s staff representative.

Back in Ontario, I’ve since registered to take a course on data visualization, and we’ve also made a commitment at ONN to do a better job of discussing our own failures as a team so that we can learn from them and improve our work. The conference also reminded me just how diverse the field of evaluation really is, and that almost any question can be an evaluative one.